Consistency Isn’t Binary — There Are At Least Six Levels Between “Strong” and “Eventual”

Most engineers learn two consistency models: strong (linearizable) and eventual. Maybe three, if someone mentions “tunable consistency” from Cassandra. Then they treat every design decision as a binary choice between “correct but slow” and “fast but stale.”

There’s an entire spectrum between those endpoints, and the models in the middle are where real systems actually operate.

Linearizability is the top. Every read returns the most recent completed write, globally, as if there were a single copy. This is what people usually mean by “strong consistency.” It requires coordination on every operation, typically through a consensus protocol like Raft or through carefully designed quorum reads and writes.

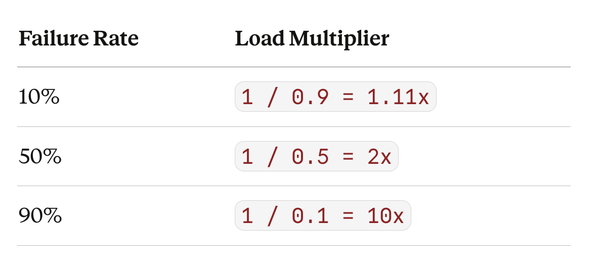

Cost: every read and write waits for a quorum of replicas, so each operation pays the latency of the slowest replica in that quorum.
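To make that cost concrete, here is a minimal sketch of quorum latency. The latency numbers and function names are illustrative assumptions, not measurements from any real system. Note that overlapping quorums (R + W > N) are a necessary ingredient, not a complete recipe, for linearizability.

```python
def quorum_latency_ms(replica_latencies_ms, quorum_size):
    """Latency of an operation that waits for `quorum_size` acknowledgements."""
    if not 1 <= quorum_size <= len(replica_latencies_ms):
        raise ValueError("quorum_size must be between 1 and the replica count")
    # The operation completes when the quorum_size-th fastest replica answers.
    return sorted(replica_latencies_ms)[quorum_size - 1]


def quorums_overlap(n, r, w):
    """True when every read quorum intersects every write quorum (R + W > N)."""
    return r + w > n


latencies = [2, 5, 40]  # hypothetical round-trip times in milliseconds
print(quorum_latency_ms(latencies, 1))  # 2: wait for the fastest replica only
print(quorum_latency_ms(latencies, 2))  # 5: majority quorum of three
print(quorum_latency_ms(latencies, 3))  # 40: wait for every replica
print(quorums_overlap(3, 2, 2))         # True
```

With a majority quorum the straggler at 40 ms is off the critical path, but every operation still waits for the second-fastest replica.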

Sequential consistency relaxes one constraint. Operations from a single process appear in the order they were issued, and all processes see the same total order, but that total order doesn’t have to match real time. A write at 12:00:00 could appear to happen after a write issued by another process at 12:00:01, as long as every observer sees them in the same sequence. This was the original model for shared-memory multiprocessors (Lamport, 1979). It is cheaper than linearizability because it drops the real-time ordering requirement.
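A tiny brute-force checker can make the definition tangible. This is a sketch for toy histories only (it tries every interleaving), and the history encoding is an assumption made for illustration: one list per process, in program order, with operations written as `("w", key, value)` or `("r", key, value_observed)`.

```python
def sequentially_consistent(histories):
    """Search for one total order that respects program order and explains every read."""

    def search(positions, store):
        # All operations placed: a valid total order exists.
        if all(pos == len(h) for pos, h in zip(positions, histories)):
            return True
        for i, history in enumerate(histories):
            pos = positions[i]
            if pos == len(history):
                continue
            kind, key, value = history[pos]
            next_positions = positions[:i] + (pos + 1,) + positions[i + 1:]
            if kind == "w":
                if search(next_positions, {**store, key: value}):
                    return True
            elif store.get(key) == value:
                # A read may be placed here only if it sees the latest write.
                if search(next_positions, store):
                    return True
        return False

    return search((0,) * len(histories), {})


writers = [[("w", "x", 1)], [("w", "x", 2)]]
observer_a = [("r", "x", 2), ("r", "x", 1)]  # saw 2 first, then 1
observer_b = [("r", "x", 1), ("r", "x", 2)]  # saw 1 first, then 2

print(sequentially_consistent(writers + [observer_a]))              # True
print(sequentially_consistent(writers + [observer_a, observer_b]))  # False
```

One observer seeing the writes in either order is fine, whatever the wall clock said. Two observers disagreeing about the order is not, because no single total order explains both.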

Causal consistency relaxes further. If operation A could have influenced operation B (A happened before B and B could have observed A’s result), then everyone sees A before B. But concurrent operations, ones with no causal relationship, can appear in different orders to different observers. This captures the intuition that “if you saw my message and replied, everyone should see my message before your reply” without requiring global ordering of unrelated events. COPS and Eiger implement this. It is substantially cheaper than sequential consistency because only causally related operations need coordination.
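One common way to decide whether two operations are causally related or concurrent is to compare vector clocks. The sketch below uses that technique for illustration; it is not a description of how COPS or Eiger track dependencies, and the node names are made up.

```python
def relation(a, b):
    """Compare two vector clocks (dicts of node -> counter).

    Returns "before" if a causally precedes b, "after" if b precedes a,
    "equal" if they are the same clock, and "concurrent" otherwise.
    """
    nodes = set(a) | set(b)
    a_le_b = all(a.get(n, 0) <= b.get(n, 0) for n in nodes)
    b_le_a = all(b.get(n, 0) <= a.get(n, 0) for n in nodes)
    if a_le_b and b_le_a:
        return "equal"
    if a_le_b:
        return "before"
    if b_le_a:
        return "after"
    return "concurrent"


message = {"alice": 1}            # Alice posts a message
reply = {"alice": 1, "bob": 1}    # Bob replies after seeing it
unrelated = {"carol": 1}          # Carol posts without seeing either

print(relation(message, reply))    # before
print(relation(reply, unrelated))  # concurrent
```

Only the "before" pair needs to be delivered in order everywhere. The concurrent pair can be applied in whichever order each replica receives it.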

PRAM (Pipelined RAM) consistency requires that operations from a single source are seen in order by all observers, but different sources can be seen in different orders. You’ll always see my writes in the order I issued them. But you might see my writes interleaved with someone else’s writes differently than a third observer does. PRAM is equivalent to the combination of three session guarantees: monotonic reads (once you see a value, you never see an older one), monotonic writes (your writes apply in the order you issued them), and read-your-writes (you always see your own most recent write).
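The per-source ordering rule can be sketched as a check on what a single observer applied. The `(source, sequence_number)` encoding is an assumption for illustration, with sequence numbers starting at 1 for each source.

```python
def respects_source_order(observed):
    """True if each source's writes appear in increasing sequence order.

    `observed` is the list of (source, sequence_number) pairs in the order
    one observer applied them.
    """
    last_seen = {}
    for source, seq in observed:
        if seq <= last_seen.get(source, 0):
            return False  # this observer saw a source's writes out of order
        last_seen[source] = seq
    return True


view_a = [("me", 1), ("you", 1), ("me", 2)]
view_b = [("you", 1), ("me", 1), ("me", 2)]
broken = [("me", 2), ("me", 1)]

print(respects_source_order(view_a))  # True
print(respects_source_order(view_b))  # True
print(respects_source_order(broken))  # False
```

`view_a` and `view_b` interleave the two sources differently, and both are acceptable under PRAM. Only reordering within one source is ruled out.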

Read-your-writes is the guarantee most applications actually need and the one Dynamo-style systems often provide. You see your own updates immediately. Everyone else sees them eventually. Session consistency in Cosmos DB includes this guarantee, scoped to a single client session; DynamoDB has no session level and offers only eventually consistent or strongly consistent reads. It’s cheap (route your reads to the replica that received your write, or track a logical timestamp) and it eliminates the most visible class of staleness bugs (“I updated my profile but the page still shows the old name”).
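The “track a logical timestamp” option can be sketched with a session token. The `Replica` and `Session` classes below are hypothetical and exist only for this example; a real client library would carry the token in request metadata rather than hold replica objects directly.

```python
class Replica:
    """A toy replica: a key-value map plus the last version it has applied."""

    def __init__(self):
        self.version = 0
        self.data = {}

    def apply(self, version, key, value):
        self.data[key] = value
        self.version = version


class Session:
    """Remembers the highest version this client wrote and refuses staler replicas."""

    def __init__(self, replicas):
        self.replicas = replicas
        self.last_written_version = 0

    def write(self, primary, key, value):
        version = primary.version + 1
        primary.apply(version, key, value)
        self.last_written_version = version

    def read(self, key):
        for replica in self.replicas:
            # Skip any replica that has not yet applied this session's writes.
            if replica.version >= self.last_written_version:
                return replica.data.get(key)
        raise RuntimeError("no replica has caught up to this session's writes")


primary, follower = Replica(), Replica()
mine = Session([follower, primary])
theirs = Session([follower, primary])

mine.write(primary, "name", "new name")
print(mine.read("name"))    # new name (follower is skipped as too stale)
print(theirs.read("name"))  # None (another session may still read the lagging follower)
```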

Eventual consistency is the floor. Replicas converge eventually. No ordering guarantees. No staleness bounds. In practice, “eventually” can mean milliseconds or hours depending on replication lag, network partitions, and conflict resolution strategy. Anti-entropy protocols, read-repair, and Merkle trees get you there, but they don’t tell you when.
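Convergence itself is easy to sketch. The example below assumes last-writer-wins as the conflict resolution strategy, which is only one of several options, and it stores each key as a hypothetical `(timestamp, value)` pair. It shows that the merge gives the same result in either direction, and says nothing about when replicas get around to exchanging state.

```python
def merge(local, remote):
    """Key-wise last-writer-wins merge of two replica states.

    Each state maps key -> (timestamp, value). Ties on timestamp are broken
    by comparing values so that every replica picks the same winner.
    """
    merged = dict(local)
    for key, entry in remote.items():
        if key not in merged or entry > merged[key]:
            merged[key] = entry
    return merged


replica_a = {"name": (1, "old"), "city": (3, "Oslo")}
replica_b = {"name": (2, "new")}

# After one exchange in each direction, both replicas hold the same state.
print(merge(replica_a, replica_b) == merge(replica_b, replica_a))  # True
print(merge(replica_a, replica_b)["name"])                         # (2, 'new')
```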

Bounded staleness sits between eventual and the others by adding a constraint on lag: reads are guaranteed to be no more than t seconds (or, in some systems, k versions) behind the latest write. Cosmos DB offers this as “bounded staleness” with a configurable lag. This gives you a quantitative SLA on staleness, which eventual consistency doesn’t. The bound is temporal, not causal, so its cost depends mainly on how tight you set it: a loose bound behaves much like eventual consistency, while a very tight one approaches the coordination cost of the stronger models.
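On the read path, a staleness bound amounts to filtering replicas by lag. This is a minimal sketch assuming each replica’s lag behind the latest write is already known; the region names and numbers are invented for the example.

```python
def eligible_replicas(replica_lag_s, max_staleness_s):
    """Names of replicas whose lag is within the staleness bound, sorted by name."""
    return sorted(
        name for name, lag in replica_lag_s.items() if lag <= max_staleness_s
    )


lags = {"eu": 0.4, "us": 3.0, "ap": 12.5}  # hypothetical seconds behind the latest write

print(eligible_replicas(lags, 5.0))  # ['eu', 'us']
print(eligible_replicas(lags, 0.1))  # []
```

An empty result means no replica is fresh enough, so the read has to go to the leader or wait.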

The hierarchy forms a lattice, not a line. Causal implies PRAM. PRAM implies monotonic reads, monotonic writes, and read-your-writes individually, and having all three gives you PRAM but not causal: causal additionally requires writes-follow-reads (a write made after a read is ordered after the write that was read). Sequential implies causal. Linearizable implies sequential. Each level drops a specific constraint and gains performance.
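The implications in this paragraph can be written down as a small graph and queried. The model names and the `IMPLIES` table below are just an encoding of the relationships stated above, not an exhaustive map of consistency models.

```python
# Each model maps to the models it directly implies.
IMPLIES = {
    "linearizable": ["sequential"],
    "sequential": ["causal"],
    "causal": ["pram"],
    "pram": ["monotonic_reads", "monotonic_writes", "read_your_writes"],
}


def guarantees(model):
    """Every model implied by `model`, including itself (transitive closure)."""
    seen = set()
    stack = [model]
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(IMPLIES.get(current, []))
    return seen


def satisfies(model, required):
    return required in guarantees(model)


print(satisfies("sequential", "read_your_writes"))       # True
print(satisfies("read_your_writes", "monotonic_reads"))  # False
print(satisfies("monotonic_reads", "read_your_writes"))  # False
```

The last two lines show why this is not a line: read-your-writes and monotonic reads are incomparable, and neither implies the other.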

The design question for any piece of data isn’t “strong or eventual?” It’s “which guarantees does this access pattern actually require?” A social media feed needs monotonic reads (scrolling backward shouldn’t show a post disappearing). A collaborative editor needs causal consistency (your reply to my comment should always appear after my comment). A bank ledger needs linearizability (a balance check that starts after a withdrawal completes must reflect that withdrawal). A recommendation engine needs none of these; eventual is fine.

Production systems that mix consistency levels per data path can outperform systems that pick one model globally, because they pay for coordination only where staleness is expensive. The spectrum exists because different data has different costs of staleness, and the right model minimizes total cost: coordination overhead on the write path plus wrongness cost on the read path.
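The total-cost framing can be sketched as a small selection function. Every number below is invented purely to show the shape of the trade-off (arbitrary cost units, not measurements), and the function name is hypothetical.

```python
def cheapest_model(coordination_cost, staleness_cost):
    """Pick the model with the lowest coordination cost plus staleness cost."""
    totals = {
        model: coordination_cost[model] + staleness_cost[model]
        for model in coordination_cost
    }
    return min(totals, key=totals.get)


# Hypothetical per-operation coordination overhead, in arbitrary units.
coordination = {"linearizable": 10, "causal": 4, "eventual": 1}

# Hypothetical expected cost of serving a stale or misordered read.
ledger_staleness = {"linearizable": 0, "causal": 50, "eventual": 500}
recommendation_staleness = {"linearizable": 0, "causal": 0, "eventual": 0}

print(cheapest_model(coordination, ledger_staleness))          # linearizable
print(cheapest_model(coordination, recommendation_staleness))  # eventual
```

The same coordination costs lead to opposite choices once the cost of being wrong differs, which is the case for choosing a model per data path.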
