Load Balancing Doesn’t Balance Load

Round-robin assigns requests evenly. Least-connections sends to the server with the fewest active connections. Power-of-two-choices picks two servers at random and sends to the less loaded one. All three algorithms balance request assignment. None of them balance load.

Load is the resource consumed by a request: CPU time, memory allocation, I/O wait, database queries, downstream calls. A request that reads from cache costs 2ms of CPU and 0 I/O. A request that triggers a complex aggregation costs 500ms of CPU and four database round-trips. Your load balancer treats them identically — both are one request.

Round-robin with 4 servers: server A gets requests 1, 5, 9. Server B gets 2, 6, 10. Looks balanced. But if requests 1, 5, and 9 are all cache misses hitting the database, while 2, 6, and 10 are cache hits, server A is doing orders of magnitude more work than server B. A's CPU sits at 90%, B's at 3%. The load balancer thinks it's doing its job.
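To make the skew concrete, here is a minimal sketch that replays the scenario above using the 2ms and 500ms CPU costs from earlier. The function name `round_robin_cpu` and the 12-request trace are illustrative assumptions, not part of any real load balancer.

```python
from itertools import cycle

# Per-request CPU cost in milliseconds, taken from the figures in the text.
CACHE_HIT_MS = 2
CACHE_MISS_MS = 500


def round_robin_cpu(costs, servers):
    """Assign requests to servers in strict rotation and total the CPU cost per server."""
    totals = {name: 0 for name in servers}
    for server, cost in zip(cycle(servers), costs):
        totals[server] += cost
    return totals


# Hypothetical trace: requests 1, 5 and 9 are cache misses, the rest are hits.
costs = [CACHE_MISS_MS if i % 4 == 1 else CACHE_HIT_MS for i in range(1, 13)]
totals = round_robin_cpu(costs, ["A", "B", "C", "D"])
print(totals)  # {'A': 1500, 'B': 6, 'C': 6, 'D': 6}
```

Every server received exactly three requests, yet A burned 1500ms of CPU against 6ms for each of the others.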

Least-connections is better but still wrong. It sends new requests to the server with the fewest active connections — a reasonable proxy for “least busy.” But connections aren’t load. A server can hold 200 idle WebSocket connections and be at 1% CPU. Another server can have 5 connections all executing heavy queries at 95% CPU. Least-connections picks the second server. The proxy is broken for heterogeneous workloads.
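The same failure can be sketched in a few lines. The `Backend` class and its field names are hypothetical; the point is only that the selection function never looks at CPU.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    active_connections: int
    cpu_percent: float  # shown for illustration only; the balancer never reads it


def least_connections(backends):
    """Return the backend with the fewest active connections."""
    return min(backends, key=lambda b: b.active_connections)


backends = [
    Backend("idle-websockets", active_connections=200, cpu_percent=1.0),
    Backend("heavy-queries", active_connections=5, cpu_percent=95.0),
]
chosen = least_connections(backends)
print(chosen.name, chosen.cpu_percent)  # heavy-queries 95.0
```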

Power-of-two-choices (available in Nginx, HAProxy, and many other modern load balancers) is the best general-purpose algorithm. Pick two servers at random, send to the one with fewer connections. The mathematical insight (Mitzenmacher, 2001) is that this achieves exponentially better load distribution than random selection, with minimal overhead: when n requests are placed on n servers, the most loaded server ends up with roughly log n / log log n requests under purely random placement, but only about log log n with two choices. But it still uses connection count as the load metric. Same blind spot.
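A rough simulation shows the effect. This is a sketch under simplifying assumptions: requests never finish (the classic balls-into-bins setup), every request counts as one unit, and the function names, the server count, and the seed are all made up for illustration.

```python
import random


def pick_random(loads, rng):
    """Baseline: choose one backend uniformly at random."""
    return rng.randrange(len(loads))


def pick_two_choices(loads, rng):
    """Sample two distinct backends and return the one with the lower count."""
    a, b = rng.sample(range(len(loads)), 2)
    return a if loads[a] <= loads[b] else b


def max_load(pick, n_servers, n_requests, seed):
    """Place n_requests one at a time and report the busiest backend's count."""
    rng = random.Random(seed)
    loads = [0] * n_servers
    for _ in range(n_requests):
        loads[pick(loads, rng)] += 1
    return max(loads)


random_max = max_load(pick_random, 10_000, 10_000, seed=1)
two_choice_max = max_load(pick_two_choices, 10_000, 10_000, seed=1)
print("random:", random_max, "two choices:", two_choice_max)
```

The two-choice maximum should come out noticeably lower than the random one. Note that `loads` here counts requests, not cost, so the simulation inherits exactly the blind spot described above.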

What would actual load balancing look like? The load balancer would need to know the cost of each request before routing it. That requires application-level information — which endpoint, which parameters, what cache state — that the load balancer doesn’t have. Or it would need real-time resource telemetry from each server: actual CPU utilization, memory pressure, I/O wait. Some service meshes expose this (Envoy can weight based on health checks with degraded states), but the reporting lag means you’re always balancing based on what load looked like 5-10 seconds ago, not what it is now.

The practical gap: the load balancer operates at L4 or L7 and makes a routing decision in microseconds based on connection metadata. Actual load is an application-level concept measured in milliseconds. The two systems operate at different timescales with different information. This is a fundamental impedance mismatch, not a configuration problem.

Adaptive load balancing narrows the gap. Track request latency per backend. If server A's P50 latency rises while B's stays flat, route away from A. Latency is a better proxy for load than connection count because it reflects what the server is actually experiencing. Netflix's internally developed load balancer uses response-time-weighted routing for this reason. But latency is still a trailing indicator: by the time latency rises, the server is already overloaded.
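As a minimal sketch of the idea, the router below keeps a sliding window of recent latencies per backend and routes to the lowest median. The class name `LatencyAwareRouter`, the window size, and the sample latencies are assumptions for illustration; this is not how Netflix's balancer or any specific product is implemented, and a production system would more likely weight traffic than send everything to a single winner.

```python
from collections import deque
from statistics import median


class LatencyAwareRouter:
    """Route to the backend with the lowest P50 over a sliding window of samples."""

    def __init__(self, backends, window=100):
        self.samples = {name: deque(maxlen=window) for name in backends}

    def record(self, backend, latency_ms):
        """Store one observed response time; the deque drops the oldest sample when full."""
        self.samples[backend].append(latency_ms)

    def p50(self, backend):
        window = self.samples[backend]
        # With no data yet, report 0 so that an unmeasured backend gets tried.
        return median(window) if window else 0.0

    def choose(self):
        return min(self.samples, key=self.p50)


router = LatencyAwareRouter(["A", "B"], window=5)
for latency in [20, 20, 400, 400, 400]:  # A degrades partway through
    router.record("A", latency)
for latency in [20, 20, 20, 20, 20]:     # B stays flat
    router.record("B", latency)
print(router.choose())  # B
```

The trailing-indicator problem is visible here too: A's median only moved after three slow responses had already been served.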

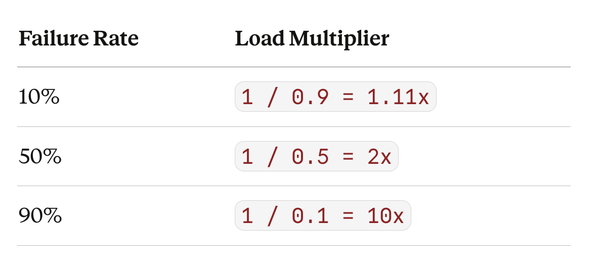

The honest assessment: load balancers distribute requests. Whether that distributes load depends on whether your requests have uniform cost. When they don’t — and they almost never do in production — “balanced” request distribution coexists with wildly unbalanced resource utilization. Knowing this changes how you design capacity: provision for the worst-case request distribution, not the average.
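A back-of-the-envelope calculation shows how far apart the two provisioning targets can be. The 2ms and 500ms costs come from the earlier example; the 10% miss rate and the 10 requests per second per server are assumed numbers chosen purely for illustration.

```python
HIT_MS, MISS_MS = 2, 500      # per-request CPU cost from the earlier example
MISS_PERCENT = 10             # assumed share of expensive requests
REQUESTS_PER_SECOND = 10      # assumed per-server request rate

# Average case: the server sees the fleet-wide mix of cheap and expensive requests.
average_ms = ((100 - MISS_PERCENT) * HIT_MS + MISS_PERCENT * MISS_MS) / 100

# Worst case: every request routed to this server happens to be expensive.
worst_ms = MISS_MS

# CPU-milliseconds consumed per wall-clock second, expressed as cores.
average_cores = REQUESTS_PER_SECOND * average_ms / 1000
worst_cores = REQUESTS_PER_SECOND * worst_ms / 1000

print(f"average: {average_cores:.3f} cores, worst case: {worst_cores:.1f} cores")
```

Under these assumptions a server sized for the average needs about half a core, while one that catches an unlucky run of expensive requests needs five.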